Layered navigation is the set of filters down the side of a Magento category page: size, color, price, brand, and whatever else your catalog supports. Set up well, it speeds a shopper to the exact product they want. Set up badly, it floods Google with thousands of near-duplicate filtered URLs and quietly drains your crawl budget. Most Magento stores get the shopper-facing half right and the SEO half wrong.

This guide covers both halves: how to configure layered navigation in Magento so it actually helps people shop, and how to handle the filtered URLs it generates so it helps your SEO instead of hurting it, using Google’s current guidance rather than the advice that was standard a few years ago.

Overview

- Layered (faceted) navigation helps shoppers filter a category to the products they want, and on-site filtering is strongly linked to higher conversion.

- Its SEO risk is crawl and duplication: every filter combination can spawn a crawlable URL, wasting crawl budget and creating near-duplicate pages.

- Google’s current advice is to block most filtered URLs from crawling, which reverses older guidance that warned against using robots.txt for this.

🚀 Quick takeaway

Treat layered navigation as two jobs. For shoppers, make filters fast and relevant. For search, decide deliberately which filtered URLs Google may crawl and index, and block the rest. The stores that get burned are the ones that let every filter combination become a crawlable page.

What is Magento layered navigation?

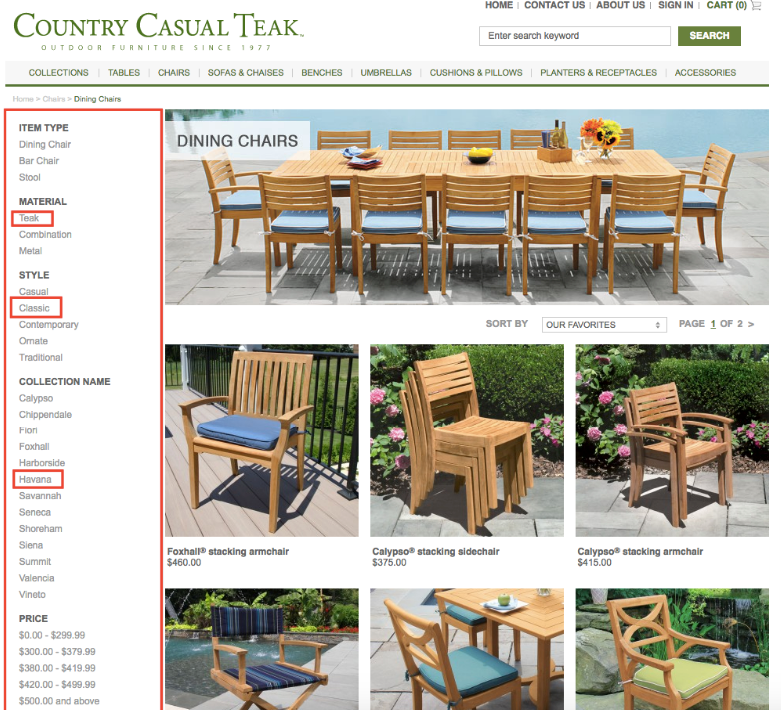

Layered navigation, also called faceted navigation, is the filtering panel on a Magento category or search-results page. It lets a shopper narrow a large catalog by attributes such as price, size, color, or brand, and it is one of the most-used features on any store with more than a handful of products.

In Magento it is driven by product attributes. Any attribute you mark as filterable, on an anchor category, can appear as a filter. That flexibility is the strength and the trap: it makes a great shopping tool and, left unmanaged, a sprawl of URL combinations search engines try to crawl.

How to set up layered navigation in Magento

The shopper-facing setup is straightforward and worth getting right before any SEO work:

- Mark the right attributes as filterable. In the attribute settings, set “Use in Layered Navigation” only for attributes shoppers actually filter by. More filters are not better; relevant filters are.

- Enable the category as an anchor. Anchor categories show products from sub-categories and activate layered navigation, so the filter set is complete.

- Configure price navigation. Choose automatic or manual price steps that match your catalog, so the price filter offers sensible ranges rather than awkward ones.

- Consider AJAX filtering. Out of the box, each filter click reloads the page. An AJAX layered-navigation approach (native on modern frontends, or via an extension on Luma) updates results in place, which is faster and feels better on mobile.
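To see why the price step matters, here is a minimal Python sketch of fixed-width price bucketing. It is an illustration only, not Magento's own price-navigation algorithm; the function name `price_ranges` and the sample prices are hypothetical.

```python
from collections import Counter


def price_ranges(prices, step):
    """Group prices into fixed-width ranges [k * step, (k + 1) * step).

    Returns a list of (low, high, product_count) tuples, skipping empty ranges.
    This is a generic bucketing sketch, not Magento's implementation.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    # Integer bucket index for each price, e.g. 48.0 with step 25 -> bucket 1.
    counts = Counter(int(p // step) for p in prices)
    return [(k * step, (k + 1) * step, counts[k]) for k in sorted(counts)]


# Hypothetical catalog prices.
prices = [12.0, 19.5, 24.0, 48.0, 52.0, 310.0]

for step in (25, 100):
    print(f"step = {step}")
    for low, high, n in price_ranges(prices, step):
        print(f"  {low}-{high}: {n} products")
```

With these sample prices, a step of 100 lumps five of the six products into a single range, while a step of 25 spreads them across several. That is the kind of difference to check against your real catalog before settling on a step.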

🚀 Quick takeaway

Only make attributes filterable if shoppers actually use them. Every extra filter multiplies the URL combinations search engines try to crawl, so a lean, relevant filter set is better for both shoppers and SEO.

The SEO problem with layered navigation

Here is where most stores get into trouble. Every filter a shopper can click is a URL a search engine can try to crawl, and filters combine. Three attributes with five values each already allow 215 single-select filter combinations per category (6 × 6 × 6 − 1), and a large catalog turns that into thousands or millions of low-value, near-duplicate pages.
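The arithmetic behind that growth can be sketched in a few lines of Python. The function name and the attribute counts below are illustrative assumptions; the sketch also assumes each filter state maps to exactly one URL, which only holds if parameter order is normalized.

```python
from math import prod


def filtered_url_count(values_per_attribute, multi_select=False):
    """Count distinct non-empty filter states for one category.

    Single-select: each attribute is either unset or set to one value,
    so it contributes (n + 1) options.
    Multi-select: any subset of an attribute's values may be active,
    so it contributes 2 ** n options.
    The unfiltered category (nothing selected) is subtracted at the end.
    """
    if multi_select:
        options = [2 ** n for n in values_per_attribute]
    else:
        options = [n + 1 for n in values_per_attribute]
    return prod(options) - 1


# Five values spread over three attributes (2 + 2 + 1).
print(filtered_url_count([2, 2, 1]))                      # 17
# Three attributes with five values each.
print(filtered_url_count([5, 5, 5]))                      # 215
# The same attributes when shoppers can tick several values at once.
print(filtered_url_count([5, 5, 5], multi_select=True))   # 32767
```

These are counts per category, so the total across a store is roughly this number multiplied by the number of anchor categories.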

Two problems follow. First, crawl budget: search engines spend their limited crawl allowance on filtered junk instead of your real pages. Second, duplication: filtered pages are often near-identical to the category and to each other, which dilutes the signals that should concentrate on the pages you want to rank. Our guide to duplicate content covers that side in depth, and our Magento SEO tips cover the wider technical setup.

🚀 Quick takeaway

The danger of layered navigation is not the filters themselves. It is letting every filter combination become a crawlable, indexable URL. Decide which filtered pages have real search demand, and treat the rest as crawl waste to be controlled.

How to control crawling of filtered URLs

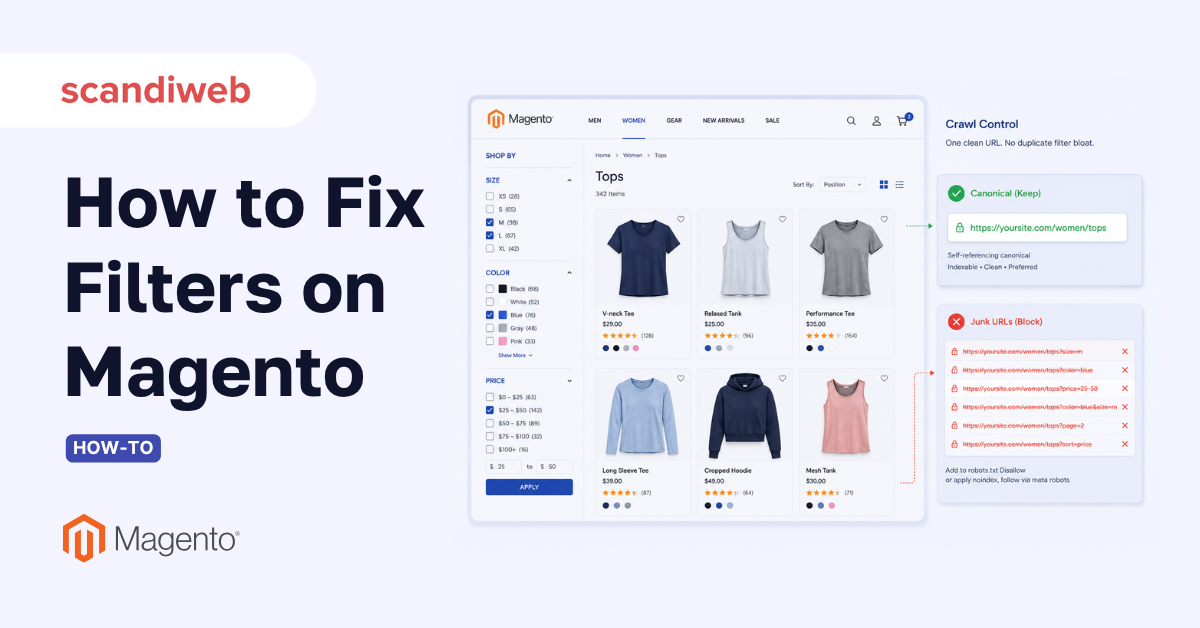

This is where current guidance differs from the advice many older Magento articles still repeat. Google’s Search Central documentation now recommends blocking the filtered URLs you do not want crawled, and is explicit that, in most cases, there is no good reason to allow crawling of filtered navigation. The practical toolkit:

- Use robots.txt to disallow filtered URL patterns. This is now Google’s recommended primary control for faceted navigation, the opposite of older advice that warned against using robots.txt here. Disallow the query-parameter patterns your filters generate so they are never crawled.

- Use rel="nofollow" on facet links where appropriate to discourage crawlers from following into the filter sprawl.

- Reserve canonical tags for genuine near-duplicates you still want crawled. Google treats canonical as a hint, not a directive, and considers it less reliable than robots.txt for managing crawl at scale.

- Keep a small set of valuable filtered pages indexable only where there is real search demand (for example, a popular “brand + category” combination), and let those be crawled and optimized deliberately.
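As a rough illustration of the robots.txt approach, the Python sketch below generates Disallow rules for a list of filter parameters and checks sample URLs against them. The parameter names (`color`, `size`, `price`), the sample paths, and all function names are assumptions for illustration; use the query parameters your own store generates. The matcher is a simplified stand-in for wildcard matching, not Google's parser, and it ignores Allow rules and rule precedence.

```python
import re


def disallow_patterns(filter_params):
    """Build robots.txt path patterns that match each filter parameter,
    whether it is the first query key (?color=) or a later one (&color=)."""
    patterns = []
    for param in filter_params:
        patterns.append(f"/*?{param}=")
        patterns.append(f"/*&{param}=")
    return patterns


def robots_txt(patterns, user_agent="*"):
    """Render the patterns as a robots.txt group."""
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Disallow: {p}" for p in patterns]
    return "\n".join(lines)


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern ('*' wildcard, optional trailing
    '$' anchor) into a compiled regex. Simplified sketch only."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile(regex + ("$" if anchored else ""))


def is_blocked(path_and_query, patterns):
    """True if any Disallow pattern matches from the start of the path."""
    return any(pattern_to_regex(p).match(path_and_query) for p in patterns)


# Hypothetical filter parameters. 'brand' is left out on purpose so that
# brand-only pages stay crawlable.
patterns = disallow_patterns(["color", "size", "price"])
print(robots_txt(patterns))

for url in [
    "/women/tops.html",
    "/women/tops.html?brand=12",
    "/women/tops.html?color=49",
    "/women/tops.html?brand=12&size=168",
]:
    print(url, "->", "blocked" if is_blocked(url, patterns) else "crawlable")
```

Leaving a parameter out of the list is how this sketch keeps a chosen set of filtered pages crawlable. Any URL that combines it with a blocked parameter is still disallowed.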

Note two things that have changed. Google retired the Search Console URL Parameters tool, so any advice telling you to configure parameters there is out of date. Google also no longer uses rel="prev"/"next" for pagination. Build your approach on robots.txt and deliberate indexing, not on those deprecated tools.

🚀 Quick takeaway

The rule changed. Google now wants you to block filtered URLs from crawling with robots.txt, not lean on canonical tags or the retired URL Parameters tool. If your SEO setup predates that, it is probably doing the opposite of what Google now recommends.

Layered navigation and Core Web Vitals

Filters are also a performance issue. A full page reload on every filter click is slow, especially on mobile, and slow filtering both frustrates shoppers and drags down Core Web Vitals. AJAX-based layered navigation updates results without a full reload, which is faster and smoother.

This is one more reason modern Magento frontends matter. A lightweight frontend like Hyvä ships fast layered-navigation components and strong Core Web Vitals by default, where the legacy Luma theme often needs an extension and tuning to get there. If you are improving navigation, it is worth looking at the frontend at the same time.

🚀 Quick takeaway

Slow filters cost you twice: shoppers abandon, and Core Web Vitals suffer. AJAX filtering that updates results without a full page reload fixes both, and a modern frontend gives you that out of the box rather than as an add-on.

Layered navigation best practices

Pulling it together, a well-run Magento layered navigation looks like this:

- Only the attributes shoppers actually use are filterable, on properly anchored categories.

- Sensible price steps and, ideally, AJAX filtering for speed.

- A deliberate decision about which filtered URLs Google may crawl, with robots.txt blocking the rest.

- A small, intentional set of high-demand filtered pages kept indexable and optimized.

- Regular checks in Search Console that crawl is going to your real pages, not filter sprawl.
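One way to put a number on that last check is to take a list of URLs that Googlebot has requested and measure what share of them carry filter parameters. The Python sketch below assumes you already have such a list, for example pulled from server access logs. The parameter names, sample URLs, and function names are hypothetical.

```python
from urllib.parse import parse_qs, urlsplit

# Assumption: the query parameters your layered navigation generates.
FILTER_PARAMS = {"color", "size", "price"}


def is_filtered(url, filter_params=FILTER_PARAMS):
    """True if the URL's query string contains any filter parameter."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)
    return any(name in filter_params for name in query)


def filtered_share(crawled_urls, filter_params=FILTER_PARAMS):
    """Fraction of crawled URLs that are filtered pages (0.0 for no input)."""
    if not crawled_urls:
        return 0.0
    hits = sum(is_filtered(url, filter_params) for url in crawled_urls)
    return hits / len(crawled_urls)


# Hypothetical sample of URLs requested by a crawler.
crawled = [
    "https://example.com/women/tops.html",
    "https://example.com/women/tops.html?color=49",
    "https://example.com/women/tops.html?color=49&size=168",
    "https://example.com/women/tops.html?p=2&price=10-20",
]
print(f"{filtered_share(crawled):.0%} of crawled URLs are filtered pages")
```

Once filtered URLs are blocked, that share should fall over time. If it holds steady or rises, the disallow rules are probably missing a parameter or a URL pattern.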

How scandiweb approaches Magento navigation and SEO

scandiweb has delivered over 2,100 eCommerce projects since 2003, and faceted navigation is one of the most common places we find a store both losing shoppers and leaking crawl budget. Our approach treats it as one problem with two sides: a Magento SEO pass that brings filtered-URL handling in line with current Google guidance, and a conversion rate optimization pass that makes the filters themselves fast and relevant. Done together, navigation stops being a liability and starts earning rankings and conversions at the same time.

Frequently asked questions

What is layered navigation in Magento?

Layered navigation, or faceted navigation, is the filter panel on a Magento category or search page that lets shoppers narrow a catalog by attributes such as price, size, color, or brand. It is driven by product attributes marked as filterable on anchor categories, and it is essential for any store with a sizable catalog.

Does layered navigation hurt SEO?

It can, if left unmanaged. Filters combine into many near-duplicate URLs that waste crawl budget and dilute ranking signals. Managed properly, with most filtered URLs blocked from crawling and only high-demand ones kept indexable, layered navigation helps both shoppers and SEO.

Should I use robots.txt or canonical tags for filtered URLs?

Google’s current guidance favors robots.txt to block the filtered URLs you do not want crawled, and treats canonical tags as a less reliable hint for crawl management at scale. Use robots.txt as the primary control, reserve canonical for genuine near-duplicates you still want crawled, and keep only a deliberate set of high-demand filtered pages indexable. This reverses older advice that warned against robots.txt for faceted navigation.

How do I set up layered navigation in Magento?

Mark the attributes shoppers filter by as “Use in Layered Navigation,” enable the category as an anchor so filters show products from sub-categories, configure sensible price steps, and consider AJAX filtering so results update without a full page reload. Then decide which filtered URLs search engines may crawl.

How do I make Magento filters faster?

Use AJAX-based layered navigation so each filter click updates results in place instead of reloading the whole page, which is faster and smoother on mobile. A modern, lightweight frontend such as Hyvä provides fast filtering and strong Core Web Vitals by default, where the legacy Luma theme usually needs an extension and tuning.

Not sure whether your filters are helping or quietly hurting your rankings? Audit your Magento SEO with us and we will check how your layered navigation is being crawled and indexed, then fix the setup on both the shopper and search sides.
