Part one: Infrastructure

Microservice architecture (MSA) is becoming increasingly prominent in Scandiweb’s projects. Primarily used in our blockchain endeavours, it’s seeping into other ventures as well. Put briefly, microservices is a style of software development that structures an application as a collection of loosely coupled services, in contrast to the more traditional monolithic style.

In what follows, we offer a case study of a successful MSA — our very own Publica.com, the publishing cryptoproject!

This article is divided into two parts. In the first part, we’ll take an in-depth look at the infrastructure: the components (Terraform, VPC and subnets, load balancers, autoscaling groups and more), what they do and the challenges they solve. In the second part, we focus on the services and microservices themselves: we’ll explore the purpose of each microservice and highlight its significance in the Publica system.

About the project

Publica is an innovative project that turns ebooks into cryptocurrency: every book on the platform has its own token and smart contract. If you own a book’s token, or BOOK COIN as we call it, you can also read the book. Yes, read your token! Our wallet is coupled with an e-reader and, when a token is stored in the wallet, the system gives access to the book’s ePub file.

We also run BOOK CROWDFUNDING campaigns — since books function as tokens, we can easily presell a book by selling the token and raising funds for the production of a quality title. When a book is eventually written and uploaded, token holders will be able to read it and send tokens to their friends, so they can read it too! One can even trade the tokens — re-selling or exchanging the book.

Publishing through Publica is borderless and transparent, without any DRM, EULA or geofencing. If you’re as excited about this as we are, check out the Cryptonauts video about the Publica platform. They even published their own book during shooting!

Why a Microservices Architecture?

Using microservices has numerous benefits and only a few downsides — overall we believe it to be the right approach for building large-scale applications with extensive functionality if you want to deliver quickly and regularly.

Last November, when we did Publica’s proof of concept, we had a couple of important revelations:

First: at the time, the only open source libraries we found for communicating with Ethereum nodes were written in JavaScript. This meant we would have to create a Node.js app if we didn’t want to run the communication with the blockchain through the front-end, which would leave the Ethereum node exposed to the world, a crypto faux pas.

Second: our team had various skill levels, a mix of Node.js gurus and people who mainly work with PHP applications. There are also benefits to using a framework like Laravel over Node.js for some functionality. For instance, Laravel has Eloquent, which makes interaction with the database a breeze, while the Node.js ecosystem leans towards NoSQL, which in our experience is not that great for flat table structures, has performance issues with a lot of data (1M+ records) and lacks active record libraries that could compete with Eloquent.

The concise answer is this: using MSA is safer and friendlier to development. It also permits continuous delivery and efficient scalability, among numerous other benefits.

Infrastructure

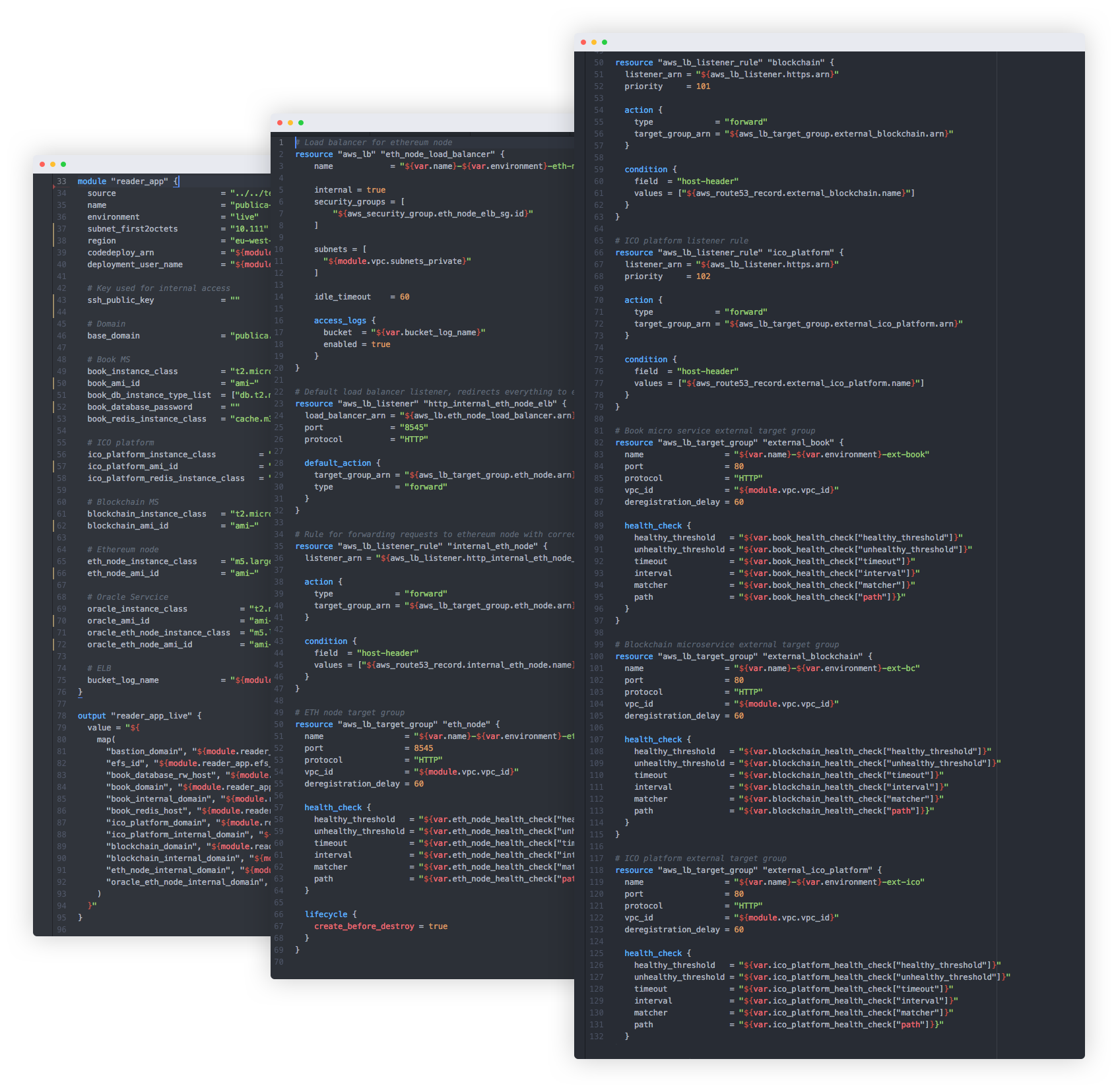

Terraform

Our infrastructure is provisioned with Terraform. Terraform lets us make and track changes quickly as code, rather than through the AWS web console. The best part is that we can write reusable modules. In fact, our whole infrastructure is a module that accepts several parameters, which is great for multiple environments, such as STAGING, DEVELOPMENT and LIVE.

We just pass different values to the module, and several hundred resources are created exactly as they are for the other environments, which takes much of the human error and lengthy debugging out of the equation. Changes are implemented in the same manner: update the module, apply the Terraform plan for all environments, and they will all be updated identically.
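The idea of one parameterised module instantiated once per environment can be sketched in Python. This is only an illustration of the pattern, not our Terraform code: the parameter names, resource names and domains below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvironmentParams:
    """Values that differ between environments (all hypothetical)."""
    name: str
    instance_type: str
    min_instances: int
    domain: str


def infrastructure_module(params: EnvironmentParams) -> dict:
    """Return the resource definitions for one environment.

    The set of resources is fixed by the module; only the
    parameter values change from one environment to the next.
    """
    prefix = params.name.lower()
    return {
        f"{prefix}-vpc": {"type": "vpc"},
        f"{prefix}-external-lb": {
            "type": "load_balancer",
            "domain": params.domain,
        },
        f"{prefix}-catalog-asg": {
            "type": "autoscaling_group",
            "instance_type": params.instance_type,
            "min_size": params.min_instances,
        },
    }


ENVIRONMENTS = [
    EnvironmentParams("DEVELOPMENT", "t2.micro", 1, "dev.example.com"),
    EnvironmentParams("STAGING", "t2.small", 1, "staging.example.com"),
    EnvironmentParams("LIVE", "t2.medium", 2, "example.com"),
]

# One call per environment: same module, different values.
plans = {env.name: infrastructure_module(env) for env in ENVIRONMENTS}
```

Because every environment goes through the same function, the kinds of resources created cannot drift apart between environments; only the values passed in differ.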

VPC and Subnets

Public subnet

Our infrastructure consists of 2 groups of subnets. The public subnets are accessible from outside, with a bastion host acting as the single entry point into the infrastructure, where user authentication takes place.

Private subnet

The private subnet is where everything lives, protected from the outside world by very restrictive access rules that allow only HTTP traffic from the load balancers located inside the public subnet. This layer provides additional security, since the only way in from outside is to send HTTP requests indirectly through those load balancers.
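The split into public and private subnets, and the rule that private hosts accept only HTTP from the public side, can be sketched with Python's standard `ipaddress` module. The CIDR ranges and the number of subnets are assumptions chosen for illustration, not our real network layout.

```python
import ipaddress

# Assumed VPC range, carved into /24 subnets.
VPC_CIDR = ipaddress.ip_network("10.0.0.0/16")
subnets = list(VPC_CIDR.subnets(new_prefix=24))

public_subnets = subnets[:2]    # 10.0.0.0/24, 10.0.1.0/24
private_subnets = subnets[2:4]  # 10.0.2.0/24, 10.0.3.0/24


def is_private(address: str) -> bool:
    """True if the address belongs to one of the private subnets."""
    ip = ipaddress.ip_address(address)
    return any(ip in net for net in private_subnets)


def private_ingress_allowed(source: str, port: int) -> bool:
    """Private hosts accept only HTTP (port 80) from the public subnets,
    which is where the load balancers live."""
    src = ipaddress.ip_address(source)
    from_public = any(src in net for net in public_subnets)
    return from_public and port == 80
```

In this sketch a request from `10.0.0.15` on port 80 is accepted, while SSH from the same host, or any traffic from an address outside the VPC, is rejected.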

Load Balancers

External Load Balancer

The external load balancer has 2 purposes — it serves as a router to direct traffic from outside (mobile applications, website) to the corresponding microservice and as a TLS termination proxy.

Requests from outside come in as HTTPS. The external load balancer terminates TLS, and any further communication from it to the private subnets happens over plain HTTP, since encryption is not necessary for internal communication. This allows us to use TLS only where it is required and save resources where it is not.
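The two roles of the external load balancer, routing and TLS termination, can be illustrated with a small Python sketch. The path prefixes and internal host names are hypothetical; in reality this is done by the load balancer's configuration rather than by application code.

```python
from urllib.parse import urlsplit

# Hypothetical routing table: public path prefix -> internal service.
ROUTES = {
    "/catalog": "http://catalog.internal",
    "/blockchain": "http://blockchain.internal",
}


def route(public_url: str) -> str:
    """Map an incoming HTTPS URL to the plain-HTTP upstream URL."""
    parts = urlsplit(public_url)
    if parts.scheme != "https":
        raise ValueError("external traffic must arrive over HTTPS")
    for prefix, upstream in ROUTES.items():
        # Match the prefix itself or anything below it.
        if parts.path == prefix or parts.path.startswith(prefix + "/"):
            # TLS ends here: the upstream URL uses plain HTTP.
            return upstream + parts.path
    raise LookupError(f"no microservice registered for {parts.path}")
```

For example, `route("https://api.example.com/catalog/books/42")` returns `http://catalog.internal/catalog/books/42`.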

Internal Load Balancer

Microservices talk to the outside world and to each other as well. The internal load balancer solves two things: it allows microservices to talk to each other without ever leaving the private subnet, and it acts as a router, so that communication reaches the correct service.

Single Purpose Load Balancers

We also have single purpose load balancers. Their role is mainly to provide an additional security layer by using security groups on top of the Ethereum node and routing requests to the Geth client.

We use these load balancers for our oracle service and blockchain microservice, so that these 2 applications, and only they, can connect to the Geth client and speak with the Ethereum network. These load balancers, of course, exist only in the private subnet.

Autoscaling Groups

In our infrastructure, autoscaling groups work as a failsafe and scaling solution. Each microservice and application has its own group, so, if they are experiencing increased traffic and require additional resources, an additional instance is created inside the autoscaling group to increase capacity — this is implemented via CPU usage scaling alarms.

One of the biggest benefits of a microservice architecture is this: since each chunk of functionality is its own application, autoscaling groups scale up or down only the parts that are affected. For example, suppose there is a very popular ICO and a lot of buy requests are going to the blockchain microservice. In this case, the autoscaling group will add an instance for this particular group and everything else will be unaffected. With a regular monolithic application, a new instance of the whole application would have to be created.
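The per-service scaling behaviour described above can be sketched as follows. The CPU thresholds, group sizes and the one-instance step are assumptions for illustration; the real behaviour is defined by the scaling alarms attached to each autoscaling group.

```python
from dataclasses import dataclass

# Assumed alarm thresholds (average CPU, in percent).
SCALE_UP_CPU = 70.0
SCALE_DOWN_CPU = 20.0


@dataclass
class AutoscalingGroup:
    name: str
    instances: int
    min_size: int = 1
    max_size: int = 4


def apply_cpu_alarm(group: AutoscalingGroup, avg_cpu_percent: float) -> int:
    """Add or remove one instance depending on the group's average CPU."""
    if avg_cpu_percent > SCALE_UP_CPU and group.instances < group.max_size:
        group.instances += 1
    elif avg_cpu_percent < SCALE_DOWN_CPU and group.instances > group.min_size:
        group.instances -= 1
    return group.instances


# Each microservice has its own group and is evaluated independently.
groups = {
    name: AutoscalingGroup(name, instances=1)
    for name in ("blockchain", "catalog")
}

# A popular ICO loads the blockchain service; the catalog stays calm.
cpu_readings = {"blockchain": 92.0, "catalog": 35.0}
for name, group in groups.items():
    apply_cpu_alarm(group, cpu_readings[name])
```

After this run the blockchain group has grown to 2 instances while the catalog group still has 1, which is exactly the isolation a monolith cannot offer.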

Security Groups

We use a whitelist approach for our security groups: everything is restricted unless specified otherwise. This increases security because, if one of our services gets compromised, it would still have very limited access within the private subnet. For instance, our catalog microservice can’t connect to the Ethereum nodes and the Geth client, and microservices that don’t have access to the AWS Aurora database won’t be able to connect to it, even if they had the credentials.
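A whitelist boils down to a default-deny check against an explicit set of allowed connections. The sketch below assumes a handful of rules for illustration: the Geth rules follow the description above, while the rule giving the catalog service access to Aurora is an assumption.

```python
# Explicitly allowed (source, destination) pairs; everything else is denied.
ALLOWED_CONNECTIONS = {
    ("blockchain", "geth"),
    ("oracle", "geth"),
    ("catalog", "aurora"),  # assumption, for illustration only
}


def can_connect(source: str, destination: str) -> bool:
    """Default deny: a connection is possible only if it is whitelisted."""
    return (source, destination) in ALLOWED_CONNECTIONS
```

With these rules, `can_connect("catalog", "geth")` is `False` no matter what credentials a compromised catalog service might hold, because the check happens at the network level.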

Database

For our MySQL database we use Aurora. The best things about it are its backup capabilities and point-in-time restore. It is great for production use, since it makes it very easy to restore a database to any point in time and provides auto scaling capabilities.

AWS S3 Buckets

We utilise S3 buckets for various things. As standalone components, they are used as a CDN to deliver assets like book covers and ePub files. Other buckets are private and are used for deployments, for collecting logs from all microservices in one place and as a source for AWS Lambda serverless applications.

S3 lets us store files with private permissions and generate temporary links that expire after a short time. This allowed us to securely store ePub files and distribute them only once we have made sure that the person requesting them should have access; because the link soon expires, a shared copy of it quickly stops working for anyone else. Building our own solution for this would have been a complicated and lengthy process, but S3 and Laravel had this functionality built in and the implementation took only a few lines of code.
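To show the general mechanism behind expiring links, here is a minimal Python sketch that signs a path together with an expiry timestamp. It is not S3's actual signing algorithm and not our Laravel code; the secret key, the URL format and the function names are all hypothetical.

```python
import hashlib
import hmac
import time
from typing import Optional

SECRET_KEY = b"demo-secret"  # hypothetical; never hard-code real keys


def make_signature(path: str, expires_at: int) -> str:
    """Sign the file path together with its expiry time."""
    message = f"{path}:{expires_at}".encode()
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def signed_link(path: str, expires_at: int) -> str:
    """Build a temporary link that carries its expiry and signature."""
    signature = make_signature(path, expires_at)
    return f"{path}?expires={expires_at}&signature={signature}"


def is_valid(path: str, expires_at: int, signature: str,
             now: Optional[float] = None) -> bool:
    """Accept the link only if it is untampered and not yet expired."""
    current = time.time() if now is None else now
    expected = make_signature(path, expires_at)
    return hmac.compare_digest(expected, signature) and current < expires_at
```

Because the expiry time is part of the signed message, a reader cannot extend a link by editing the `expires` parameter, and a link for one book cannot be reused for another.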

