If your eCommerce store uses AI – product recommendations, chatbots, pricing tools, fraud detection – the EU AI Act likely applies to some part of your technology stack.

The regulation entered into force in August 2024, and its obligations phase in between February 2025 and August 2027. Companies using AI in the EU will need to understand how their systems are classified and what obligations come with each classification.

You’re probably asking: “How does the EU AI Act actually affect my store?”

We’re here to answer the key questions about this regulatory act and explain what it means for your business.

How the EU AI Act classifies AI systems

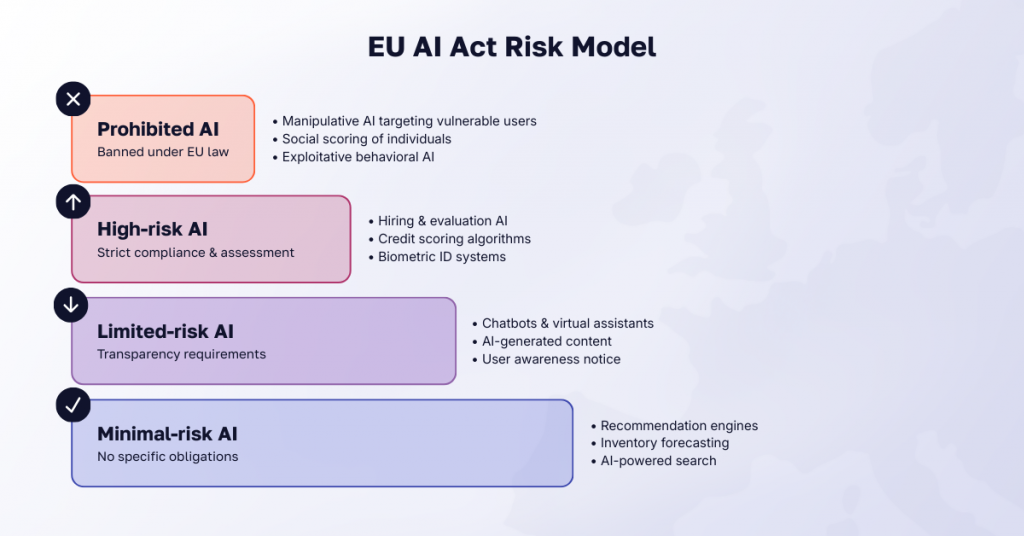

The regulation uses a risk-based model, so not all AI is regulated in the same way. AI systems are grouped into four categories:

- prohibited AI

- high-risk AI

- limited-risk AI

- minimal-risk AI.

Most retail AI tools – recommendation engines, AI search, demand forecasting, and merchandising algorithms – fall into the limited-risk or minimal-risk categories. That means businesses can continue using them, though certain transparency or documentation requirements may apply.

Still, there are grey areas. Dynamic pricing, customer profiling, and AI chatbots are all common features in modern online stores, and each of them interacts with the regulation in slightly different ways.

Below are ten questions eCommerce teams ask most often when trying to understand how the EU AI Act affects their business.

EU AI Act for eCommerce: 10 key questions answered

Does the EU AI Act apply to my Shopify or Magento store?

The EU AI Act applies to companies that use AI systems affecting customers in the EU. That includes eCommerce stores running AI-powered tools.

For a Shopify or Magento (Adobe Commerce) store, the Act becomes relevant when the store uses AI features such as:

- product recommendation engines

- AI-powered search

- chatbots or virtual assistants

- fraud detection systems

- dynamic pricing tools

- personalization algorithms.

In these cases, your business is responsible for how the AI system is used, even if the technology comes from a SaaS vendor.

The good news is that many of these eCommerce AI tools fall into the lower-risk categories. In practice, this usually means basic transparency or documentation rather than heavy compliance procedures. To gauge what compliance may be required, ask these quick questions about each AI tool used in your store:

- Does the AI interact directly with customers?

- Does it make automated decisions about people?

- Does it rely on personal data?

If the answer to all of these is no, the tool will usually fall into a low-risk category and require little additional compliance. A yes to any of them means the tool deserves a closer look.
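The three screening questions can be turned into a quick triage helper. The following is only a minimal sketch in Python: the `ToolAnswers` class, its field names, and the example tools are hypothetical, and the result is a prioritisation hint, not a legal classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolAnswers:
    """Answers to the three screening questions for one AI tool (hypothetical schema)."""
    name: str
    interacts_with_customers: bool
    decides_about_people: bool
    uses_personal_data: bool


def needs_closer_review(tool: ToolAnswers) -> bool:
    """Return True as soon as any screening question is answered 'yes'."""
    return (
        tool.interacts_with_customers
        or tool.decides_about_people
        or tool.uses_personal_data
    )


# Illustrative entries only; replace them with the tools in your own stack.
tools = [
    ToolAnswers("support chatbot", True, False, True),
    ToolAnswers("demand forecasting", False, False, False),
]

for tool in tools:
    label = "review more closely" if needs_closer_review(tool) else "likely low priority"
    print(f"{tool.name}: {label}")
```

A tool is treated as low priority only when all three answers are no, so a single yes is enough to put it on the review list.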

🚀 Quick takeaway

The platform itself isn’t the problem. Simply review what AI tools are used and document how they work – the most common uses usually fall into low-risk categories with minimal compliance requirements.

Is my AI-powered recommendation engine classified as high-risk?

In most cases, no. Recommendation engines used in eCommerce are typically not considered high-risk AI under the EU AI Act.

High-risk systems mainly involve areas where automated decisions can significantly affect people’s rights or opportunities, such as hiring decisions, credit scoring, or biometric identification.

Typical retail personalization tools do not fall into these categories.

However, there are a few situations worth reviewing. Regulators may look more closely if an algorithm:

- excludes certain groups from seeing offers

- targets vulnerable users with manipulative recommendations

- makes significant automated decisions without oversight.

Even then, these issues usually fall under consumer protection rules or GDPR profiling requirements, not the high-risk AI category itself.

🚀 Quick takeaway

Recommendation engines are usually not high-risk AI. Still, documenting how these tools work and what data they use is good practice.

Can I still use AI for dynamic pricing in the EU?

Yes. The EU AI Act does not prohibit dynamic pricing.

Under the EU AI Act, most dynamic pricing systems fall into the minimal-risk AI category. That means retailers can continue using algorithms that adjust prices based on demand, inventory, or customer behavior.

Regulators focus on how the pricing algorithm affects customers, not the fact that prices change.

Problems may arise if an algorithm:

- targets individuals with higher prices based on sensitive personal data

- pressures users to buy through manipulative behavioral signals

- hides discriminatory pricing practices.

These situations can trigger scrutiny under consumer protection law or GDPR.
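One way to catch the first problem early is to check a pricing model's input features against a list of sensitive attributes before deployment. The sketch below assumes a hypothetical denylist and hypothetical feature names. It only matches names, so it will not detect proxies for sensitive data (a postcode standing in for income, for example).

```python
# Hypothetical denylist: attribute names a pricing model should not use.
# Adapt it to your own data model and legal advice.
SENSITIVE_SIGNALS = frozenset(
    {"age", "disability", "health", "ethnicity", "religion", "financial_situation"}
)


def find_sensitive_signals(features: list[str]) -> list[str]:
    """Return the input features whose names match the denylist (case-insensitive)."""
    return sorted(
        {f for f in features if f.strip().lower() in SENSITIVE_SIGNALS}
    )


# Illustrative feature list for a pricing model.
pricing_features = ["demand", "inventory_level", "financial_situation"]
flagged = find_sensitive_signals(pricing_features)
if flagged:
    print("Review before deployment:", ", ".join(flagged))
```

A check like this fits naturally into a model release checklist or a CI step, with a human deciding what to do about each flagged feature.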

🚀 Quick takeaway

Dynamic pricing itself isn’t restricted by the EU AI Act. Just make sure your pricing algorithm relies on legitimate signals like demand or inventory, not sensitive personal data or manipulative targeting.

What does “profiling clients” mean under the Act, and is it prohibited?

In simple terms, profiling means using personal data to predict or evaluate a customer’s behavior.

In eCommerce this happens all the time. Stores analyze browsing history, past purchases, and engagement signals to personalize recommendations or marketing messages.

This type of profiling is not automatically prohibited under the EU AI Act.

Regulators become concerned when AI systems manipulate users or exploit vulnerabilities. Examples include systems that:

- target vulnerable users based on age, disability, or financial situation

- push people toward decisions they would not otherwise make

- rank or score individuals based on behavior.

The key difference lies in intent and impact. Personalization that helps customers discover relevant products is generally acceptable. Systems designed to manipulate behavior or discriminate against certain groups can trigger regulatory scrutiny.

🚀 Quick takeaway

Make sure you understand exactly how your AI profiles customers, keep the logic transparent, and avoid automated decisions that could unfairly disadvantage certain users.

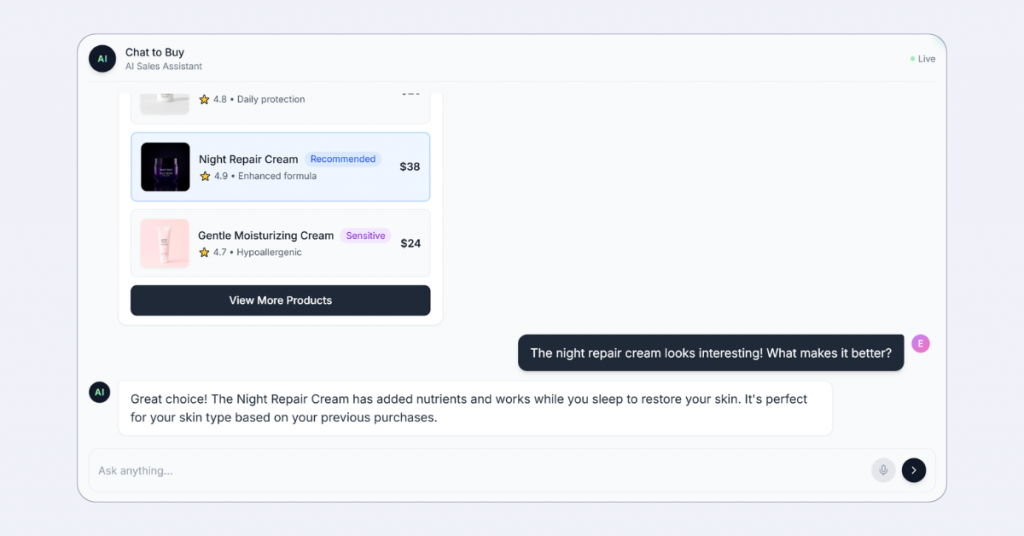

Do I need to disclose when customers are talking to a chatbot?

Yes. The EU AI Act requires disclosure when customers interact with an AI chatbot.

If users could reasonably assume they are speaking with a human, the system must clearly indicate that the interaction is automated.

Compared with other parts of the EU AI Act, this obligation is relatively light. A compliant implementation for AI chatbots and virtual assistants is usually simple:

- label the assistant clearly as an AI chatbot

- show a short disclosure when the conversation starts

- mention AI usage in the help center or privacy notice.

This rule applies regardless of whether the chatbot is built internally or provided by a third-party vendor. If your store deploys the chatbot, your business is responsible for the disclosure.
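In code, the disclosure can be enforced at the point where the bot sends its first message, so it does not depend on each conversation script remembering to include it. This is a minimal sketch: `ChatSession`, `send_bot_message`, and the disclosure wording are hypothetical and not tied to any chatbot vendor's API.

```python
from dataclasses import dataclass, field

# Hypothetical wording; adjust it to your brand voice and legal advice.
AI_DISCLOSURE = "You are chatting with an automated AI assistant."


@dataclass
class ChatSession:
    """Minimal per-conversation state (hypothetical)."""
    disclosed: bool = False
    transcript: list[str] = field(default_factory=list)


def send_bot_message(session: ChatSession, text: str) -> list[str]:
    """Return the messages to show, prepending the disclosure once per session."""
    outgoing = []
    if not session.disclosed:
        outgoing.append(AI_DISCLOSURE)
        session.disclosed = True
    outgoing.append(text)
    session.transcript.extend(outgoing)
    return outgoing


session = ChatSession()
print(send_bot_message(session, "How can I help you today?"))
print(send_bot_message(session, "Your order has shipped."))
```

The first call returns the disclosure followed by the greeting, and later calls return only the reply. Keeping the flag on the session also leaves a record in the transcript that the disclosure was shown.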

🚀 Quick takeaway

In most cases you just need to tell users they’re interacting with AI – for example: “Hi, I’m a virtual assistant. I can help you find products or check order status.” A simple message like this is usually enough to meet the EU AI Act transparency requirement.

What are the actual penalties for non-compliance?

EU AI Act penalties follow the same structure as GDPR fines (a fixed amount or a share of global annual revenue) but with a higher ceiling, and they depend on the type of violation.

The maximum fines are:

- Up to €35 million or 7% of global annual revenue for prohibited AI systems

- Up to €15 million or 3% of global annual revenue for breaches of most other obligations, including those for high-risk AI systems and the transparency duties

- Up to €7.5 million or 1% of global annual revenue for providing incorrect, incomplete, or misleading information to regulators.

For most companies, the cap is whichever is higher: the fixed amount or the revenue percentage. For SMEs and start-ups, it is whichever is lower.
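The "higher of" rule is simple arithmetic, and a short worked example shows where the percentage starts to dominate. This sketch uses the €35 million / 7% tier from the list above. The function name and the revenue figures are hypothetical, and it calculates only the maximum possible fine under that rule, not what a regulator would actually impose.

```python
def max_fine_eur(fixed_cap_eur: int, share_basis_points: int, global_revenue_eur: int) -> int:
    """Upper bound of a fine: the higher of the fixed cap or the revenue share.

    share_basis_points expresses the percentage in hundredths of a percent
    (7% == 700) so the calculation stays in integer arithmetic.
    """
    revenue_based = global_revenue_eur * share_basis_points // 10_000
    return max(fixed_cap_eur, revenue_based)


# Prohibited-AI tier: EUR 35 million or 7% of global annual revenue.
print(max_fine_eur(35_000_000, 700, 100_000_000))    # 35000000 (fixed cap is higher)
print(max_fine_eur(35_000_000, 700, 1_000_000_000))  # 70000000 (7% of revenue is higher)
```

For this tier the two amounts are equal at €500 million in revenue. Below that the fixed cap sets the ceiling, and above it the percentage does.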

The good news is that most eCommerce AI tools fall into the minimal-risk or limited-risk categories. In these cases, compliance mainly involves transparency and responsible data use. The bigger risk is not the fine itself but a lack of visibility: not knowing which AI systems are running in the business and how they fit into the EU AI Act risk categories.

🚀 Quick takeaway

To reduce the risk of fines, start by mapping all AI tools used in your business and identifying their risk category under the EU AI Act.

How does the Act affect my use of US-based AI tools like OpenAI or Google?

Using AI tools from US vendors like OpenAI or Google is still allowed. What matters is whether the AI system is used in the European market, not where the vendor is based.

In practice, you are responsible for how the AI tool is used in your store, even if the technology comes from a third-party vendor.

When using external AI tools, it is worth checking:

- what data the AI system processes

- how the system generates outputs

- whether any transparency or disclosure obligations apply.

Many major vendors are already preparing documentation for EU AI Act compliance. Retailers should still confirm how their providers handle data sources, model behavior, and transparency requirements.

🚀 Quick takeaway

Using US AI tools is allowed, but you’re still responsible for how they’re used in your store. Ask vendors how they handle EU AI Act compliance, data sources, and transparency requirements.

What is a “chain of custody” for data, and do I need one?

In simple terms, chain of custody means knowing where the data used by an AI system comes from and how it moves through the system.

This usually involves tracking:

- where the input or training data originates

- how the data is processed by the AI model

- who has access to the data

- how outputs are generated and stored.

Under the EU AI Act, these traceability requirements mainly apply to high-risk AI systems. For most eCommerce use cases, the requirement is relatively light. Retail AI tools typically rely on store data that businesses already control.

The practical step is to keep basic documentation of:

- which AI systems are used in the store

- what data feeds those systems

- which vendors provide the technology

- how the AI outputs affect customer interactions.

Many companies already maintain similar documentation through GDPR compliance and vendor reviews.
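The four documentation points above map naturally onto one record per AI system. The following is a minimal sketch of such a register in Python. The `AISystemRecord` schema, the vendor name, and the example entries are hypothetical, and a spreadsheet with the same columns would serve equally well.

```python
import json
from dataclasses import asdict, dataclass, field, fields


@dataclass
class AISystemRecord:
    """One entry in an AI inventory (hypothetical schema)."""
    name: str
    data_inputs: list[str] = field(default_factory=list)
    vendor: str = ""
    customer_impact: str = ""


def missing_fields(record: AISystemRecord) -> list[str]:
    """List the fields that are still empty for this record."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


inventory = [
    AISystemRecord(
        name="product recommendations",
        data_inputs=["browsing history", "past purchases"],
        vendor="ExampleVendor",  # hypothetical vendor name
        customer_impact="orders the products shown on the home page",
    ),
    AISystemRecord(name="fraud detection", data_inputs=["order data"]),
]

for record in inventory:
    gaps = missing_fields(record)
    if gaps:
        print(f"{record.name}: still undocumented: {', '.join(gaps)}")

# Export the register so it can be shared with legal or vendor-review teams.
print(json.dumps([asdict(r) for r in inventory], indent=2))
```

The gap check makes incomplete entries visible, which is usually more useful at the start than the register itself.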

🚀 Quick takeaway

Chain of custody means tracking where your AI data comes from and how it’s used – something many businesses already do through GDPR processes.

My AI is purely internal with no personal data – am I still affected?

Usually no. Internal AI systems typically fall into the minimal-risk category under the EU AI Act.

These are use cases such as:

- demand forecasting

- inventory optimization

- warehouse routing

- supply chain predictions

- internal analytics models.

Because these tools support internal decision-making and do not directly influence customers, they are generally considered minimal-risk AI. These are largely unregulated under the EU AI Act. Businesses can continue using them without certification or strict transparency requirements.

That said, companies should still keep a basic record of where AI is used internally. Internal systems sometimes evolve into customer-facing features, such as automated pricing or product recommendations, which can change the regulatory requirements.

🚀 Quick takeaway

In most cases, internal AI is the lowest compliance priority. Focus instead on AI tools that interact with customers or make automated decisions about them.

Where do I start if I want to become compliant?

Start by mapping the AI systems used in your eCommerce stack. Typical places to check include:

- recommendation engines and personalization tools

- search and merchandising algorithms

- AI chatbots or support assistants

- fraud detection systems

- dynamic pricing tools

- marketing automation platforms using predictive models.

Once these systems are identified, classify them according to the EU AI Act risk categories: prohibited, high-risk, limited-risk, and minimal-risk.

Most retail AI tools fall into limited-risk or minimal-risk, which usually require transparency or documentation rather than strict regulation.

After classification, review three areas:

- Transparency – customers should know when they interact with AI

- Data usage – understand what data feeds the system

- Vendor responsibilities – confirm how third-party AI tools handle compliance.
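Once each tool has a category, sorting the list by category gives a natural review order. The sketch below assumes a hypothetical, already-completed classification of a small stack. The category assigned to each tool is illustrative only, and your own assessment may differ.

```python
from collections import defaultdict
from enum import Enum


class RiskCategory(Enum):
    """The four categories, defined from strictest to lightest."""
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


# Hypothetical classification results for illustration.
classified = {
    "AI chatbot": RiskCategory.LIMITED,
    "recommendation engine": RiskCategory.MINIMAL,
    "demand forecasting": RiskCategory.MINIMAL,
}


def group_by_category(tools: dict[str, RiskCategory]) -> dict[RiskCategory, list[str]]:
    """Group tool names under their assigned risk category."""
    grouped: dict[RiskCategory, list[str]] = defaultdict(list)
    for name, category in tools.items():
        grouped[category].append(name)
    return dict(grouped)


grouped = group_by_category(classified)

# Enum iteration follows definition order, so stricter categories print first.
for category in RiskCategory:
    for name in grouped.get(category, []):
        print(f"{category.value}: {name}")
```

Reviewing the list from the top down puts transparency, data usage, and vendor checks on the tools where they matter most.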

🚀 Quick takeaway

EU AI Act compliance usually starts with mapping the AI tools in your stack and documenting how they work. If you’re unsure how your systems fit into the regulation, consulting with a compliance or AI specialist can help clarify the next steps.

Final thoughts

The EU AI Act does not aim to stop companies from using AI in eCommerce. It aims to make companies understand where AI influences people and be transparent about it.

For most retailers, the real work is not removing AI tools or slowing innovation. It is knowing which systems you run, what data they rely on, and where automated decisions affect customers. Once that visibility exists, compliance becomes part of normal governance – much like GDPR did a few years ago.

If you want a quick review of how the EU AI Act applies to your eCommerce AI tools, talk to our AI consultants. Contact us, and our team can map your AI systems and flag the areas that may require attention.
