Part Two: Services and Microservices

In the previous part, we explored the intricacies of the Publica infrastructure. In this part, we continue with the central microservices and the other services that make up the architecture.

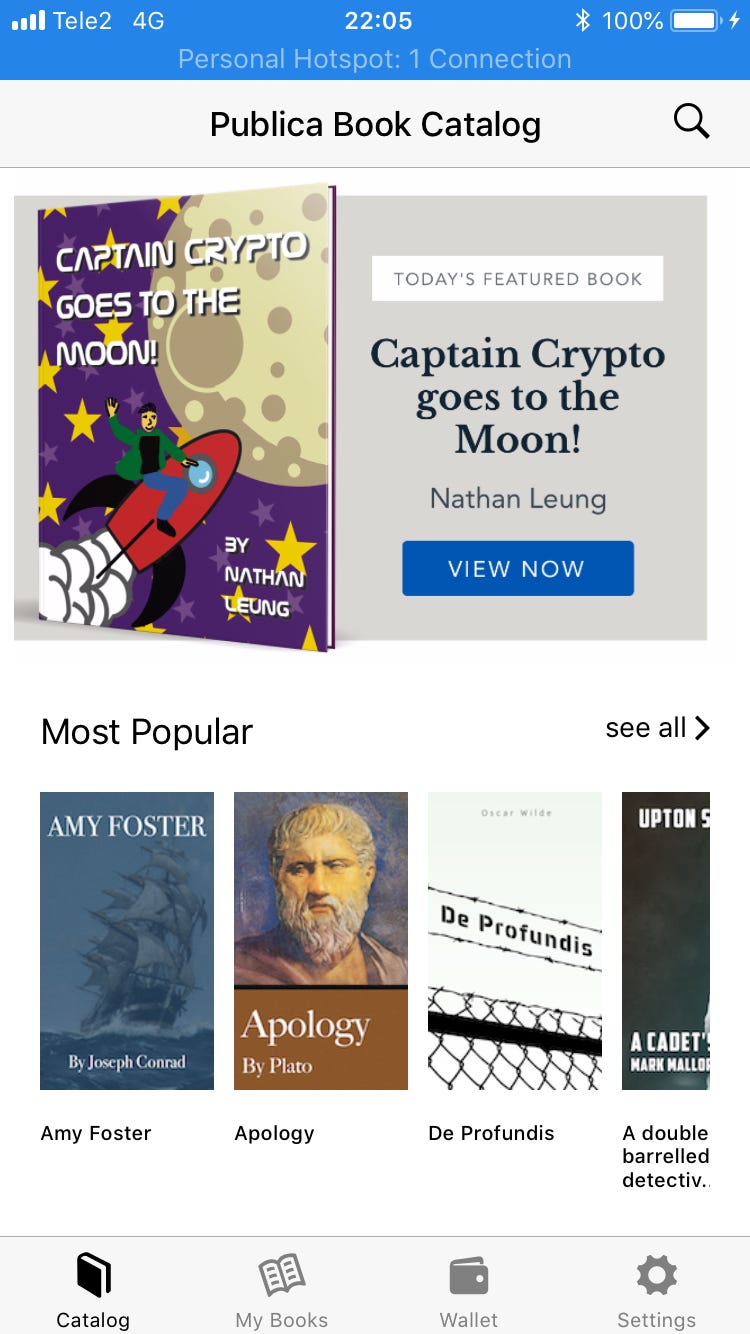

Catalog Microservice

The catalog microservice is built on top of the Laravel framework. Its main purpose is to store the book catalog and serve it to the mobile apps, and to provide a second caching layer for user balances.

We chose Laravel for several reasons. We were sure that we wanted to use MySQL for the catalog and other flat data structures, and Laravel has Eloquent, which makes working with MySQL and data relations quick and easy.

Regarding data caching: a blockchain does not have indexed data, and you can’t query collections as you would in a regular database. To improve performance, we had to cache book purchases somewhere so that users don’t have to wait several minutes for their purchased books every time the catalog is reloaded.
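The idea of caching purchases in front of a slow blockchain lookup can be sketched as a cache-aside store with a time-to-live. This is only an illustration in Python, not the actual Laravel implementation: the class name `PurchaseCache`, the `fetch_from_chain` callback and the 5-minute freshness window are all assumptions.

```python
import time
from typing import Callable, Dict, List, Tuple


class PurchaseCache:
    """Cache-aside store for a reader's purchased books (hypothetical helper)."""

    def __init__(self, fetch_from_chain: Callable[[str], List[str]],
                 ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch_from_chain   # slow path: walks the blockchain
        self._ttl = ttl_seconds          # assumed freshness window
        self._clock = clock
        self._entries: Dict[str, Tuple[float, List[str]]] = {}

    def get(self, address: str) -> List[str]:
        now = self._clock()
        entry = self._entries.get(address)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]              # fast path: served from the cache
        books = self._fetch(address)     # cache miss or stale entry
        self._entries[address] = (now, books)
        return books

    def invalidate(self, address: str) -> None:
        """Drop the entry, for example right after a new purchase."""
        self._entries.pop(address, None)


calls: List[str] = []


def slow_lookup(address: str) -> List[str]:
    # Stand-in for the multi-minute blockchain query.
    calls.append(address)
    return ["book-1", "book-2"]


cache = PurchaseCache(slow_lookup, ttl_seconds=60)
cache.get("0xabc")
cache.get("0xabc")
print(len(calls))  # 1: the second call never reached slow_lookup
```

Invalidating the entry after a purchase keeps a reader from waiting out the whole freshness window before a new book shows up.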

Blockchain Interaction Layer Microservice

We use the Geth client to interact with the Ethereum network. Geth has a built-in JSON-RPC API that works over HTTP, but making raw curl requests is not as developer-friendly as using a library. Currently, good libraries are available only for JavaScript, so our blockchain interaction layer microservice is built as a node.js app on top of the Express framework.
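To give a feel for what talking to the node's HTTP API directly involves, here is a minimal Python sketch that builds a JSON-RPC 2.0 request for `eth_getBalance` and decodes the hex-encoded reply. No request is actually sent: the address is made up, and a canned response stands in for the node. The helper names `rpc_request` and `parse_quantity` are hypothetical.

```python
import json
from typing import Any, Dict, List

WEI_PER_ETHER = 10 ** 18


def rpc_request(method: str, params: List[Any], request_id: int = 1) -> str:
    """Serialize a JSON-RPC 2.0 request body for the node's HTTP endpoint."""
    return json.dumps({"jsonrpc": "2.0", "method": method,
                       "params": params, "id": request_id})


def parse_quantity(response_body: str) -> int:
    """Decode a hex-encoded quantity such as the result of eth_getBalance."""
    response: Dict[str, Any] = json.loads(response_body)
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "RPC error"))
    return int(response["result"], 16)


# Hypothetical address, used only for illustration.
body = rpc_request("eth_getBalance",
                   ["0x0000000000000000000000000000000000000001", "latest"])

# Canned reply in place of a live node; 0xde0b6b3a7640000 wei is 1 ether.
canned = '{"jsonrpc": "2.0", "id": 1, "result": "0xde0b6b3a7640000"}'
balance_wei = parse_quantity(canned)
print(balance_wei / WEI_PER_ETHER)  # 1.0
```

Every value comes back as a hex string and every call needs this envelope, which is the kind of boilerplate a client library hides.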

Its main purpose is to read data from and write data to the Ethereum blockchain through the Geth client. Any action that involves the Ethereum network involves a request to this microservice. For example, when a reader wants to download a book, they sign a random string with their private key and ask the catalog MS for the epub file. The catalog MS then forwards the signature to the blockchain MS, which validates token ownership.
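The download check described above can be modelled roughly as follows. This Python sketch rests on several assumptions: that the random string is issued by the server and is single use, that the signature step is delegated to an injected `recover_signer` function (a real service would use an Ethereum library for this), and that token ownership is available as a plain lookup table. The class name `DownloadGate` is hypothetical.

```python
import secrets
from typing import Callable, Dict, Set

# Assumed shape of the cryptographic step: given the signed message and the
# signature, return the address that produced it. It is injected here rather
# than implemented, since signature recovery needs an Ethereum library.
RecoverSigner = Callable[[str, str], str]


class DownloadGate:
    """Hypothetical model of the challenge and token ownership check."""

    def __init__(self, recover_signer: RecoverSigner,
                 owners_by_book: Dict[str, Set[str]]) -> None:
        self._recover = recover_signer
        # Stand-in for on-chain token data; addresses stored in lower case.
        self._owners = owners_by_book
        self._pending: Dict[str, str] = {}   # challenge -> book id

    def issue_challenge(self, book_id: str) -> str:
        challenge = secrets.token_hex(16)    # random string the reader signs
        self._pending[challenge] = book_id
        return challenge

    def authorize(self, challenge: str, signature: str) -> bool:
        book_id = self._pending.pop(challenge, None)  # each challenge works once
        if book_id is None:
            return False
        signer = self._recover(challenge, signature).lower()
        return signer in self._owners.get(book_id, set())
```

Removing the challenge on first use means a captured signature cannot be replayed to download the file again.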

Passport Service

One of the biggest pitfalls of microservices is poor user and role management. A good authentication service has to be API-based; otherwise, you end up creating accounts in every single microservice. For this, we chose Laravel Passport, which provides token-based API authentication.

It is a work in progress, but we hope to achieve a single user repository capable of managing admin, author, and API users and of setting up their permissions and roles.

Caching Service

A blockchain does not work like a regular database, and you often have to make multiple requests to retrieve our readers’ book tokens. With the growing book count on our platform, this will soon become an issue, so we built a MySQL caching service on top of the blockchain that retrieves all of a user’s tokens as a collection with a single request, rather than crawling each smart contract individually.
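As a rough illustration of "one request instead of one per contract", the sketch below stores the result of a crawl in a relational table and answers a user's lookup with a single query. It uses Python's built-in `sqlite3` in memory as a stand-in for MySQL so that it runs anywhere. The table name, column names and helper functions are assumptions, not the real schema.

```python
import sqlite3
from typing import Dict, Iterable, List, Tuple

# sqlite3 (in memory) stands in for MySQL so the sketch is self-contained.
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE user_tokens (
        user_address  TEXT NOT NULL,
        book_contract TEXT NOT NULL,
        PRIMARY KEY (user_address, book_contract)
    )
""")


def refresh_cache(holders_by_contract: Dict[str, Iterable[str]]) -> None:
    """Store the result of one crawl over all book contracts."""
    rows: List[Tuple[str, str]] = [
        (user, contract)
        for contract, holders in holders_by_contract.items()
        for user in holders
    ]
    with conn:  # commits on success, rolls back on error
        conn.execute("DELETE FROM user_tokens")
        conn.executemany(
            "INSERT INTO user_tokens (user_address, book_contract) VALUES (?, ?)",
            rows,
        )


def tokens_for(user_address: str) -> List[str]:
    """Return every book contract the user holds a token in, in one query."""
    cursor = conn.execute(
        "SELECT book_contract FROM user_tokens "
        "WHERE user_address = ? ORDER BY book_contract",
        (user_address,),
    )
    return [row[0] for row in cursor]
```

The crawl still has to visit every contract, but it happens in the background; the reader's request only ever touches the indexed table.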

Implementing caching was not as smooth as we had hoped. At first, it was very simple and lived inside the catalog microservice. After multiple change requests, the caching functionality grew to the point where it should really be decoupled from the catalog microservice and become a microservice of its own.

Luckily for us, a loosely coupled infrastructure makes it very easy to rewrite parts and integrate new functionality, so one of the next items on our roadmap is to create a caching microservice and migrate this functionality out of the catalog MS.

Oracle

Hidden deep inside our private subnet is the Oracle service. Its purpose is to update the PBL exchange rate in smart contracts every day. This is required so that book prices can stay fixed in dollars rather than fluctuate with PBL token volatility.
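The arithmetic behind a fixed dollar price is simple: the token price is the dollar price divided by the current dollars-per-PBL rate. Here is a small Python sketch of that conversion. The 18-decimal token precision and the choice to round up are assumptions made for the example, and the function name is hypothetical.

```python
from decimal import Decimal, ROUND_UP

TOKEN_DECIMALS = 18  # assumption; the real token's precision may differ


def price_in_token_units(price_usd: Decimal, usd_per_pbl: Decimal) -> int:
    """Convert a fixed dollar price into the smallest PBL unit."""
    if usd_per_pbl <= 0:
        raise ValueError("exchange rate must be positive")
    pbl = price_usd / usd_per_pbl
    units = pbl * (Decimal(10) ** TOKEN_DECIMALS)
    # Rounding up is an arbitrary choice for this sketch.
    return int(units.to_integral_value(rounding=ROUND_UP))


book_price = Decimal("9.99")
yesterday = price_in_token_units(book_price, Decimal("0.05"))
today = price_in_token_units(book_price, Decimal("0.10"))
print(yesterday // 10 ** 17, today // 10 ** 17)  # 1998 999, i.e. 199.8 and 99.9 PBL
```

With these made-up rates, a $9.99 book costs 199.8 PBL at $0.05 per PBL and 99.9 PBL at $0.10, which is why the stored rate has to be refreshed regularly.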

The Oracle service is not accessible from outside the private subnet. It consists of a node.js application with a cron job that fetches the current exchange rate for PBL tokens and writes this value to the smart contract, and a single-purpose load balancer that routes traffic from the Oracle app to the Geth client. The Geth Ethereum node and the Oracle app are built as autoscaling groups as well: if an instance goes down, the autoscaling group creates a new one so the Oracle can keep working without interruption.

Other Services and Tools

When working with blockchain, you have to remember that it is an extremely new technology. Documentation and tooling are lacking, so sometimes you have to build your own tools. One of the tools we use daily is a simple application that shows our Ethereum account balances and warns us when they run low. This is useful when, for example, the wallet used by the Oracle is low on Ether for transactions.
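The core of such a balance monitor fits in a few lines. The Python sketch below takes balances in wei (the unit the node reports) and returns a warning for every account under a threshold. The account labels, the 0.1 ETH default threshold and the function name are all assumptions for illustration.

```python
from typing import Dict, List

WEI_PER_ETHER = 10 ** 18


def low_balance_warnings(balances_wei: Dict[str, int],
                         threshold_wei: int = WEI_PER_ETHER // 10) -> List[str]:
    """Return one warning line per account whose balance is under the threshold."""
    warnings: List[str] = []
    for name, balance in sorted(balances_wei.items()):
        if balance < threshold_wei:
            warnings.append(
                f"{name}: {balance / WEI_PER_ETHER:.4f} ETH is below "
                f"{threshold_wei / WEI_PER_ETHER:.4f} ETH"
            )
    return warnings


# Hypothetical account labels and balances.
for line in low_balance_warnings({"oracle": 5 * 10 ** 16, "service": 2 * 10 ** 18}):
    print(line)  # oracle: 0.0500 ETH is below 0.1000 ETH
```

Keeping the comparison in integer wei avoids floating-point surprises; the conversion to Ether is only for display.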

Another tool is a Docker container with an Ethereum testnet and many helper functions for tasks such as deploying the PBL token contract and calling the most-used smart contract methods, which makes testing and debugging easier.

Geth Clients

Setting up the Geth client for a high-availability environment was very painful. We experimented with various setups, and we are still experimenting. We started with a very basic setup: a c5.large instance and a 100GB SSD. It worked for some time but later started crashing, and in the end one Geth node became corrupted because there was too little RAM. In other cases, the Geth client ran out of free disk space.

Then we tried a c5.large instance with a larger swap file and 200GB of free space. It sort of worked, but it was unable to sync with the Ethereum network for about a week because swap was too slow for read/write operations.

Our backup strategy was to create an AMI of a full node and have the autoscaling group use it: if the node ever went down, a new, slightly outdated instance would be launched and should sync within minutes. In reality, it took far longer. In the end, we settled on light nodes for now, since they provide the best performance when a node goes down and the autoscaling group needs to replace it. It still isn’t perfect: sometimes it takes hours for a light node to find peers, and other times it takes seconds.
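Because a replacement node can take anywhere from seconds to hours to become useful, it helps to check readiness before routing traffic to it. The Python sketch below interprets the results of two standard JSON-RPC calls, `eth_syncing` and `net_peerCount`. The function name and the thresholds are assumptions, and the values are passed in directly rather than fetched from a node.

```python
from typing import Any, Dict, Union

# eth_syncing returns either false or an object with hex-encoded block numbers.
SyncStatus = Union[bool, Dict[str, Any]]


def node_is_ready(syncing: SyncStatus, peer_count_hex: str,
                  max_lag_blocks: int = 5, min_peers: int = 1) -> bool:
    """Decide from eth_syncing and net_peerCount results whether to use the node."""
    if int(peer_count_hex, 16) < min_peers:
        return False   # still searching for peers
    if syncing is False:
        return True    # the node reports that it is not syncing
    lag = int(syncing["highestBlock"], 16) - int(syncing["currentBlock"], 16)
    return lag <= max_lag_blocks


print(node_is_ready(False, "0x0"))  # False: no peers found yet
print(node_is_ready({"currentBlock": "0x64", "highestBlock": "0x66"}, "0x2"))  # True
```

The peer check comes first on purpose: a freshly started node with no peers also reports that it is not syncing, which would otherwise look healthy.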

The Geth node setup is fairly simple: install the Geth client and Supervisor, which makes sure that the Geth process is always running. Our service Ethereum account is imported into Geth, and at that point the AMI is created, which is then used by the autoscaling group.

User Interface — Mobile e-Readers and Shop

Our user interface consists of Android and iOS mobile apps that serve as a wallet, store, and e-reader. The mobile applications are built with React Native, a JavaScript framework that builds iOS and Android application packages from JavaScript code. The idea was to have a single codebase for all mobile platforms.

The reality, once again, was not that great. The first issue was the e-reader library used for epub files. No open source library worked correctly on both platforms, so we had to implement some changes to support both. The next problem occurred when we tried to submit our app to the Apple App Store: its policy forbids selling digital goods through any payment method other than Apple’s own in-app purchase system.

Of course, Apple doesn’t support cryptocurrencies, so to get approved in the App Store we had to remove the buying functionality from our iOS app. For the Google Play store this was fine, which resulted in even more platform-specific changes. Our solution for iOS users is a serverless website that works with the MetaMask wallet; for now, they have to buy books there.

Another part of the user interface is the Book ICO platform which is currently under development.

Conclusions

Microservices, in our case, have proven to work really well for reusability. Some parts are used in almost every other microservice, and they are compatible regardless of platform. Another great advantage we see is code delivery: we can work on different parts of the platform without blocking each other and deliver as soon as we are done. This was not possible with monolithic apps and scheduled deployments.

Of course, not everything is sunny. A microservice architecture has significant overhead, since you have to manage many more servers and deployments. Luckily, there are tools like Terraform that partly solve this issue. Another disadvantage is infrastructure cost: running many servers for microservices costs significantly more than running one server for a single application. This can be addressed with Docker and Kubernetes, but that is something for the future.
