Every AI vendor, every data platform, every dev tool, and every commerce platform in 2026 ends the pitch with “we support MCP.” If you are the person on the other side of those pitches, and you still don’t have an internal frame for what MCP actually changes about your stack, this guide builds the frame. It covers what MCP is, how it differs from an API, and what it unlocks across developer tools, customer support, internal data, and commerce.

scandiweb already ships MCP-based AI agents in production, including the Claude Blog Factory and an automated PPC audit agent, so the view here is grounded in what we run.

What is MCP? A working definition

MCP, short for Model Context Protocol, is the open standard that lets AI agents read live data and run commands across external tools in a way every major model understands. Anthropic introduced it in November 2024, and it is now governed by the Linux Foundation’s Agentic AI Foundation. MCP is what allows agents in ChatGPT, Claude, Copilot, Cursor, and Gemini to reach codebases, support systems, data warehouses, and commerce stacks through one common interface.

MCP does three things at runtime. It lets an agent discover the tools and data a system exposes. It lets the agent read live data in a typed shape any compliant model can interpret. And it lets the agent take action, while the server enforces what is allowed.

You will hear MCP described as USB-C for AI. The cleaner framing: when an agent inside Cursor needs to find every callsite of a deprecated function, it does not query your repo directly. It talks to a Git MCP server. Any other compliant agent could have asked the same way. Underneath, MCP rides on JSON-RPC 2.0.
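
To make the JSON-RPC 2.0 layer concrete, here is a minimal sketch of the request a client might send when an agent decides to call a tool. The `tools/call` method and the `name` and `arguments` params come from the MCP specification; the helper `build_tool_call`, the tool name `search_repo`, and its arguments are hypothetical and only illustrate the shape.

```python
import json


def build_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",        # version marker required by JSON-RPC 2.0
        "id": request_id,        # lets the client match the response to this request
        "method": "tools/call",  # MCP method for invoking a tool
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(1, "search_repo", {"query": "deprecated_fn("})
print(request)
```

Any compliant agent would send the same envelope, which is the practical meaning of "asked the same way".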

🚀 Quick takeaway

MCP is the integration protocol AI agents will use to act on systems the same way browsers use HTTP to act on web servers. Treat it as infrastructure, not a feature.

What does MCP stand for, and who is behind it

MCP stands for Model Context Protocol. Anthropic introduced it in November 2024. OpenAI adopted it in March 2025. In December 2025, Anthropic donated the protocol to the Linux Foundation’s Agentic AI Foundation, a directed fund co-founded with Block and OpenAI. Today, Anthropic, OpenAI, Google DeepMind, and Microsoft all natively support MCP, with over 500 public MCP servers available by early 2026.

The governance handoff matters. MCP is no longer an Anthropic project that other vendors agreed to play along with. It is a neutral standard with shared ownership, the same posture HTTP and TCP had once they became infrastructure.

That governance shift also matters for European organizations: an open, Linux Foundation-stewarded standard aligns more cleanly with EU digital sovereignty requirements than a single-vendor protocol, which is why MCP’s open architecture is increasingly named in European procurement conversations.

Architecturally, MCP solves the M×N integration problem. Before MCP, connecting every model to every tool meant a custom connector per pair. With MCP, every model implements the protocol once and every tool implements it once. M×N work becomes M+N. That is why the standard caught on so quickly.
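
The arithmetic is easy to check. The sketch below compares the two counts; the function name and the figures of 5 models and 20 tools are made up for illustration.

```python
def connectors_needed(models: int, tools: int) -> tuple[int, int]:
    """Return (pairwise connectors, protocol implementations)."""
    pairwise = models * tools  # one custom connector per model-tool pair
    shared = models + tools    # each side implements the protocol once
    return pairwise, shared


# Hypothetical numbers: 5 models and 20 tools.
print(connectors_needed(5, 20))  # (100, 25)
```

The gap widens with every model or tool added, because the pairwise count grows multiplicatively while the shared count grows by one.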

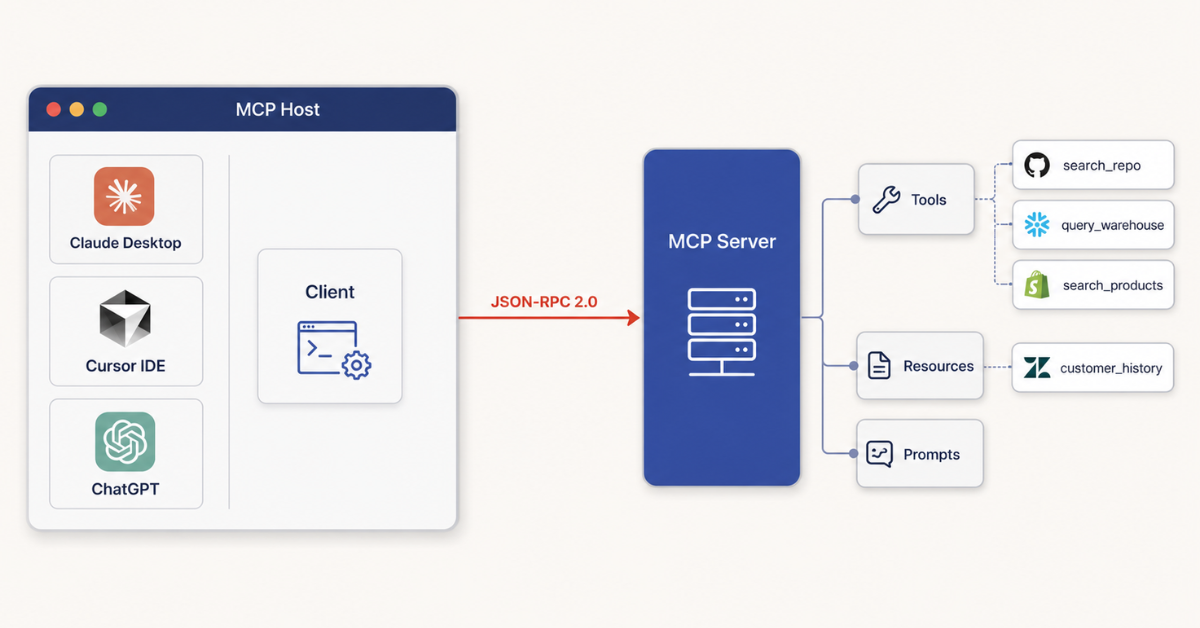

How MCP works: hosts, clients, servers, and three primitives

APIs are built for developers to call from code. MCP is built for AI agents to call at runtime. An MCP server exposes typed tools, read-only resources, and reusable prompt templates that an agent discovers on its own. The agent never sees API keys or raw endpoints, only the safe tools the server allows.

MCP architecture has three roles:

- Host. The AI app the user interacts with, like Claude Desktop, ChatGPT, Cursor, or Windsurf. The host runs the LLM and decides when to call out.

- Client. A process inside the host that maintains a one-to-one connection to a specific MCP server.

- Server. A service you control that wraps part of a system and exposes capabilities to agents.

An MCP server offers three primitives:

- Tools. Executable functions, like search_repo, create_ticket, query_warehouse, or search_products. The agent decides when to call them.

- Resources. Read-only data the agent consumes for context, like a customer history record or a pricing rule.

- Prompts. Reusable templates for how the agent should interact with a system, like the wording it uses to confirm an action with side effects.
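
The three primitives above can be pictured as three small registries. This is a toy stand-in written with the standard library only, not the real MCP SDK; the class `SketchServer`, the resource and prompt names, and their contents are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SketchServer:
    """Toy stand-in for an MCP server; not the real SDK."""

    tools: dict[str, Callable[..., object]] = field(default_factory=dict)
    resources: dict[str, str] = field(default_factory=dict)
    prompts: dict[str, str] = field(default_factory=dict)

    def describe(self) -> dict[str, list[str]]:
        """What an agent would discover: names only, no implementation details."""
        return {
            "tools": sorted(self.tools),
            "resources": sorted(self.resources),
            "prompts": sorted(self.prompts),
        }


server = SketchServer()
server.tools["create_ticket"] = lambda title: {"id": 1, "title": title}  # executable
server.resources["pricing_rule"] = "10% off orders over 100 EUR"         # read-only
server.prompts["confirm_action"] = "You are about to {action}. Proceed?"  # template

print(server.describe())
```

A real server would also publish a typed input schema for each tool; that part is left out here to keep the sketch short.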

The same shape works across industries. A GitHub server exposes search_repo. A Zendesk server exposes read_ticket and draft_reply. A Shopify Storefront server exposes search_products and cart_lines. What changes per system is which capabilities are safe to expose.

Read more: Claude Blog Factory using MCP — a production MCP host, client, and server flow with real names on the boxes.

What is an MCP server, exactly?

An MCP server is a small service you control that wraps part of a system and exposes specific tools to AI agents in a standard way. A GitHub server lets an agent search a repo and edit files. A Zendesk server lets an agent read tickets and draft replies. A Snowflake server lets an agent run scoped warehouse queries. A Shopify Storefront server lets an agent search products and build carts. The server validates each call and decides what the agent can and cannot do.

You will deal with two flavors: public MCP servers shipped by vendors or the community (hundreds exist by 2026 for the major SaaS systems), and custom servers you build for systems your vendor does not cover. The right starting point is checking what is already published for your stack before building.

🚀 Quick takeaway

An MCP server is not “another API”. It is the agent-facing interface your stack will be judged against in 2026.

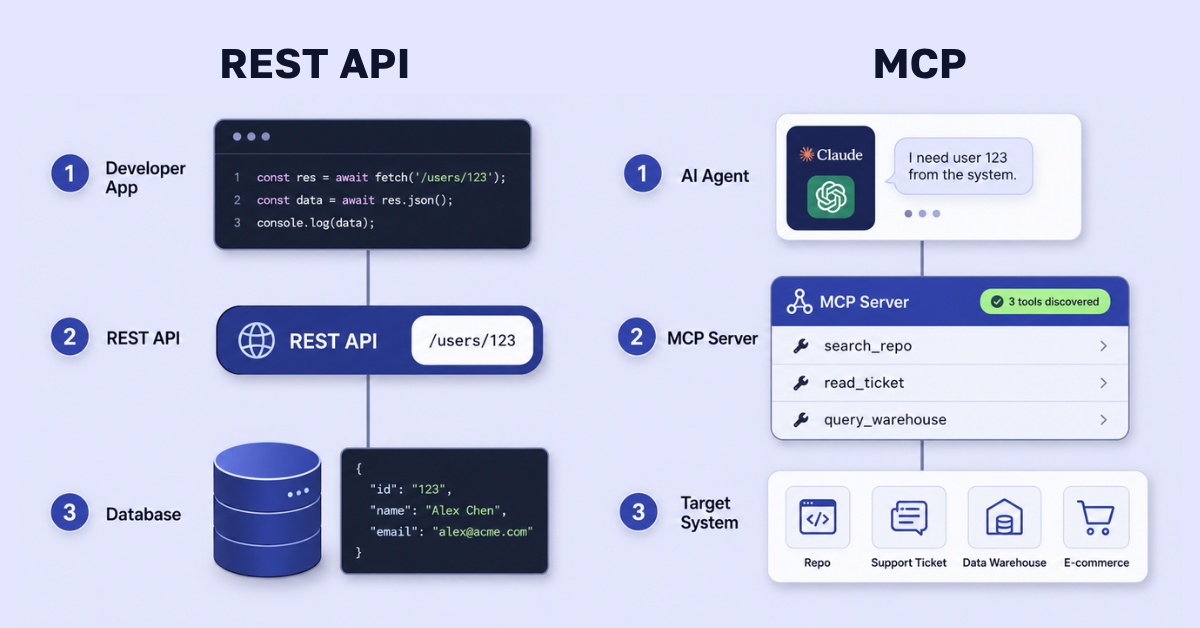

MCP vs API: what is actually different

An API is a contract between developers and a system. MCP is a contract between an AI agent and a system. The difference is who is calling, why, and what they can see.

| Dimension | REST API | MCP |
|---|---|---|
| Purpose | Software-to-software integration | Agent-to-system interaction at runtime |
| Caller | Application code that knows the endpoints in advance | An AI agent that discovers what is available at runtime |
| Discovery | Documentation, OpenAPI specs, manual setup | The server announces its tools, resources, and prompts |
| Authentication | Keys, OAuth, signed requests handled by the calling app | Handled inside the server, so the agent never sees secrets |
| State | Usually stateless, every call is independent | Stateful sessions, so the agent carries context across actions |
| Best for | Predictable, code-defined integrations | Agentic workflows where the AI decides what to do next |

MCP does not replace APIs. It sits on top of them. An MCP server you build almost always calls your existing REST or GraphQL API internally, then translates the result into an agent-friendly shape. scandiweb’s PPC audit agent, for instance, calls the Google Ads API through an MCP server, which keeps the API key off the model.
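
A rough sketch of that wrapping pattern, under loud assumptions: this is not scandiweb’s implementation and it does not call the Google Ads API. The environment variable `ADS_API_KEY`, the function names, and the response fields are all hypothetical, and `fake_fetch` stands in for the real HTTP call so the example runs offline.

```python
import os
from typing import Callable


def make_campaign_tool(fetch: Callable[[str, str], dict]) -> Callable[[str], dict]:
    """Build a tool that calls an upstream API with a server-held key."""
    # The key is read on the server; it is never part of the tool's input or output.
    api_key = os.environ.get("ADS_API_KEY", "demo-key")  # hypothetical variable name

    def get_campaign_summary(campaign_id: str) -> dict:
        raw = fetch(api_key, campaign_id)
        # Return only the fields the agent needs, in a flat shape.
        return {
            "campaign_id": campaign_id,
            "clicks": raw["metrics"]["clicks"],
            "cost": raw["metrics"]["cost"],
        }

    return get_campaign_summary


def fake_fetch(api_key: str, campaign_id: str) -> dict:
    """Stand-in for a real HTTP call to an ads API."""
    return {"auth": api_key, "metrics": {"clicks": 120, "cost": 45.5, "internal": "x"}}


tool = make_campaign_tool(fake_fetch)
print(tool("c-1"))
```

The agent supplies only a campaign ID and gets back a trimmed record; the key and the raw upstream payload stay on the server side.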

So “MCP vs API” is the wrong question. The real one is which of our APIs should be wrapped in MCP servers, and which capabilities those servers should expose to which agents.

🚀 Quick takeaway

MCP does not replace your APIs. It sits on top of them, translating API calls into an agent-readable surface that hides the secrets.

Why MCP matters now: the agentic AI shift

MCP matters because every major AI app in 2026 supports it. ChatGPT, Claude, Copilot, Cursor, and Gemini all read MCP servers natively. The standard is governed by the Linux Foundation. Over 500 public servers existed by early 2026. For any team planning AI agents, MCP is now the integration layer your stack will be measured against.

This is the shift specialists call agentic AI. A year ago the framing was “AI-powered features”, an LLM in a sidebar. Today the framing is “agents that act”: a model that reads your data, decides what to do, and runs commands across your tools without an engineer in the loop. That is what MCP enables.

The implication is clear. If your systems are not reachable by an agent through MCP by the time users expect ChatGPT, Claude, or Copilot to do work on those systems, you are not behind on AI. You are missing from the workflow.

Read more: Agentic commerce explained — the customer-facing version of the same shift.

🚀 Quick takeaway

If MCP is moving from headlines to roadmap inside your team, scandiweb’s AI growth accelerator is where our clients usually start, with a structured audit of what MCP changes in their specific stack.

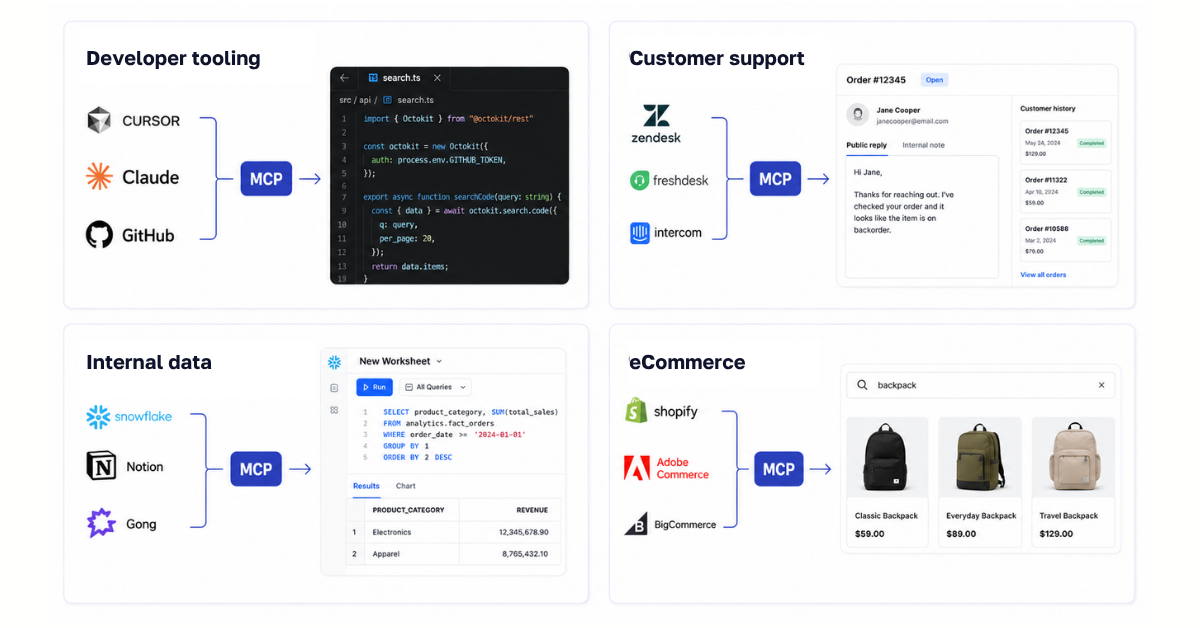

MCP use cases across industries

MCP unlocks seven core use cases for AI agents:

- Developer tooling. Search and edit code through GitHub or Cursor servers.

- Customer support. Triage and draft replies through Zendesk or Intercom.

- Internal data access. Query Snowflake, Notion, or Confluence.

- eCommerce. Read catalog, build carts, and check inventory through Shopify or Adobe Commerce.

- Content and marketing operations. Draft and audit content.

- Sales and CRM. Enrich accounts and prep meetings.

- Security and IT ops. Triage alerts and run runbooks.

The boundary line matters as much as the list. Irreversible writes, PII writes outside policy, payments above a merchant-set threshold, and anything that crosses your security policy or EU AI Act compliance obligations belong behind human-controlled review, not an agent tool. For eCommerce teams trying to draw that boundary in practice, our guide to the EU AI Act for eCommerce walks through which AI uses fall into which risk tier and where the prohibited-practice line lands for a typical store. The MCP server enforces the boundary by not exposing the dangerous capability. Do not rely on the agent to behave. Rely on the server to refuse.
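
One way to picture "rely on the server to refuse" is an explicit allowlist in the server’s dispatch path. The sketch below is illustrative only: `read_ticket` and `draft_reply` echo the tool names used in this guide, while `issue_refund`, `ToolNotExposed`, and `dispatch` are hypothetical names.

```python
ALLOWED_TOOLS = {"read_ticket", "draft_reply"}  # exposed to the agent
# "issue_refund" is deliberately absent: it stays behind human review.


class ToolNotExposed(Exception):
    """Raised when an agent asks for a capability the server does not offer."""


def dispatch(tool_name: str, handlers: dict, **arguments):
    """Run a tool only if it is on the allowlist; refuse everything else."""
    if tool_name not in ALLOWED_TOOLS:
        raise ToolNotExposed(f"{tool_name} is not available to agents")
    return handlers[tool_name](**arguments)


handlers = {
    "read_ticket": lambda ticket_id: {"id": ticket_id, "status": "open"},
    "draft_reply": lambda ticket_id, text: {"id": ticket_id, "draft": text},
    "issue_refund": lambda ticket_id: {"refunded": True},  # exists internally, never exposed
}

print(dispatch("read_ticket", handlers, ticket_id=42))
try:
    dispatch("issue_refund", handlers, ticket_id=42)
except ToolNotExposed as err:
    print("refused:", err)
```

The refusal does not depend on how the agent was prompted. The capability is simply not reachable through the server.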

🚀 Quick takeaway

The right MCP scope is not “everything an API can do”. It is “what an agent can do unsupervised, given your policy”. Start narrow, expand as you learn.

MCP across industries: developer tools, customer support, internal data, and commerce

Here is the honest picture as of mid-2026 across four areas: developer tooling, customer support, internal data, and eCommerce.

MCP in developer tooling

Developer tools were the first place MCP went mainstream. Cursor, Claude Desktop, Windsurf, and Zed shipped support in 2025; GitHub Copilot added it in 2026. Public servers exist for GitHub, GitLab, Linear, Sentry, and PagerDuty. A real flow: a developer asks Claude inside Cursor to find every callsite of a deprecated function and propose a refactor. The agent reaches the codebase via a Git server, reads related issues via a Linear server, and proposes the change as a draft pull request. scandiweb’s Blog Factory is a production MCP-based agent that runs content-engineering workflows the same way.

MCP in customer support

Customer support is where MCP most directly affects unit economics. Zendesk, Intercom, and Salesforce Service Cloud are integrating MCP-compatible interfaces in 2025–2026 for AI-drafted ticket replies and handoffs. A real flow: a customer asks about an order on a support chat. The agent calls a Zendesk server to read the ticket and customer history, then a billing server for order status, and drafts a reply the human reviews. The server lets the agent read tickets, draft replies, and tag issues, but not close or refund them without a human. The point is compressing the read-and-draft cycle, not replacing the team.

MCP in internal IT and enterprise data

Internal data is where the enterprise case lives. Public servers exist for Slack, Notion, Linear, GitHub, Snowflake, Databricks, Confluence, and Google Drive, with more landing every quarter. A real flow: an analyst asks Claude to “summarize last quarter’s churn drivers across Snowflake, Notion, and call transcripts.” The agent calls a Snowflake server for the numbers, a Notion server for the strategy doc, and a transcript server for the call data, then synthesizes. The honest gaps in 2026 are auth, audit trails, and multi-tenant scoping. Teams getting value today treat the MCP server as the policy boundary, not the agent’s promise to behave.

MCP in eCommerce and retail

eCommerce moved fast because the platforms did. Adobe Commerce made MCP the default agent protocol in 2026, with built-in catalog, cart, pricing, and inventory exposure. Shopify shipped four MCP servers by the Winter ’26 Edition (Dev, Storefront, Customer Account, Checkout) and co-developed the Universal Commerce Protocol with Google at NRF January 2026. On March 24, 2026, Shopify Agentic Storefronts went live by default for US merchants, making 5.6 million stores discoverable inside ChatGPT, Copilot, Google AI Mode, and Gemini. A real flow: a shopper in ChatGPT asks for “black running shoes under $120 in size 11 that ship by Friday.” The agent calls a Shopify Storefront MCP to query the catalog, filter by inventory, check shipping, and build a cart. For teams considering a custom build, see our Shopify eCommerce development services.

Read more: Top AI agencies — the agency landscape behind MCP-ready stacks, for teams deciding who builds.

🚀 Quick takeaway

The platform question is not “does it support MCP”. It is “what does it expose by default, and where will we still need custom servers”. Almost every stack ends up with both.

When MCP fits your roadmap, and when it does not

For most teams the question is not whether to adopt MCP, but when. The honest test has three parts: a real agent use case waiting on it, systems that can support it cleanly, and a team that can carry both the build and the security work. If none of those is true yet, track MCP but do not build toward it this quarter. Build too early and you rebuild when primitives evolve. Wait too long and you show up in 2027 to agent traffic your stack cannot serve.

Use MCP now if:

- You have an AI agent use case committed for FY26 (developer copilot, support agent, internal data agent, customer-facing storefront agent, or ops automation).

- The systems you would expose ship MCP servers today or have clean APIs ready to wrap.

- You operate three or more disparate systems an agent would need to coordinate across.

- You want your stack reachable from ChatGPT, Claude, Copilot, Cursor, or Gemini without a per-vendor custom integration.

Wait on MCP if:

- No AI agent use case is committed past proof-of-concept.

- The systems you would expose lack stable APIs, and an MCP wrapper would inherit that instability.

- The MCP primitives your use case relies on (elicitation, resource links, structured outputs) are still in active spec evolution.

- The team carrying the build cannot also carry the security work that MCP servers require.

Frequently asked questions about MCP

What does MCP stand for in AI?

MCP stands for Model Context Protocol. It is an open standard introduced by Anthropic in November 2024 and governed by the Linux Foundation’s Agentic AI Foundation since December 2025. It gives AI models a single way to connect to external tools, data, and systems, so a model can read live data and take actions without each integration being custom-built.

What is MCP in plain language?

MCP is the protocol that lets an AI agent ask a tool a question and run a command on it, in a way every major model and every compliant tool understands. Think of it as USB-C for AI. With MCP, any compliant agent can call any compliant tool, including codebases, support systems, data warehouses, and commerce stacks.

How is MCP different from a regular API?

An API is built for developers to call from code. MCP is built for AI agents to call at runtime, on their own. MCP servers expose typed tools an agent can discover and invoke without prior hardcoding, and keep API keys and unsafe calls hidden behind the server. APIs do not give an agent that safety layer.

What are MCP servers used for?

MCP servers expose specific capabilities of a system to an AI agent in a standard way. The common categories are developer tools (code search, file edits), customer support (ticket read, draft reply), internal data (warehouse queries, document retrieval), and commerce (catalog, cart, pricing, inventory). The server stays in your control.

Is MCP an Anthropic product?

No. Anthropic created and open-sourced MCP in November 2024 and donated it to the Linux Foundation’s Agentic AI Foundation in December 2025, a directed fund co-founded with Block and OpenAI. Anthropic, OpenAI, Google DeepMind, and Microsoft all support MCP natively. It is a multi-vendor standard.

How does MCP work, step by step?

An AI host (Claude Desktop, Cursor, ChatGPT) opens a client connection to an MCP server. The server lists the tools, resources, and prompts it offers. The agent picks a tool, sends a typed request over JSON-RPC 2.0, and the server runs the action and returns the result. The agent never sees secrets or anything the server has not exposed.
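
The list-then-call round trip can be sketched in a few lines. This is a simplified, in-process illustration rather than a real MCP server: it skips the `initialize` handshake and the tool input schemas, and the `TOOLS` table, the `handle` function, and the two-item catalog are hypothetical. The method names `tools/list` and `tools/call` and the error code -32601 ("Method not found") come from the MCP and JSON-RPC 2.0 specifications.

```python
import json

TOOLS = {
    "search_products": {
        "description": "Search the catalog by keyword.",
        "handler": lambda query: [
            p for p in ["black running shoe", "blue sandal"] if query in p
        ],
    }
}


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request with a simplified MCP-style response."""
    req = json.loads(raw)
    if req["method"] == "tools/list":
        result = {
            "tools": [
                {"name": name, "description": tool["description"]}
                for name, tool in TOOLS.items()
            ]
        }
    elif req["method"] == "tools/call":
        tool = TOOLS[req["params"]["name"]]
        output = tool["handler"](**req["params"]["arguments"])
        result = {"content": [{"type": "text", "text": json.dumps(output)}]}
    else:
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req["id"], "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


# Step 1: the client asks what the server offers.
print(handle(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))

# Step 2: the agent picks a tool and calls it with arguments.
call = {
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {"name": "search_products", "arguments": {"query": "running"}},
}
print(handle(json.dumps(call)))
```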

Do I need to build my own MCP server?

Often no. Hundreds of public servers exist by 2026 for GitHub, Slack, Snowflake, Notion, Linear, Zendesk, Shopify, and many other systems. You typically build custom servers only for proprietary systems, like an internal PIM or a domain-specific workflow. Check what is already published before building.

Is MCP secure?

MCP is as secure as the server you put behind it. The protocol keeps secrets on the server side, so the model never sees API keys. The real security work sits in the server: scoping each tool, validating inputs, rate-limiting, and authenticating the agent. Treat it like any public API, with one extra rule: assume the caller is an LLM and constrain accordingly.
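
Two of those server-side controls, input validation and rate limiting, can be sketched with the standard library. The limits (2 calls per 60 seconds, 200 characters) and the names `RateLimiter` and `validate_query` are arbitrary choices for illustration, not recommendations.

```python
import time
from typing import Optional


class RateLimiter:
    """Allow at most `limit` calls per `window` seconds (sliding window)."""

    def __init__(self, limit: int, window: float) -> None:
        self.limit = limit
        self.window = window
        self.calls: list[float] = []

    def allow(self, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        # Drop timestamps that have aged out of the window.
        self.calls = [t for t in self.calls if now - t < self.window]
        if len(self.calls) >= self.limit:
            return False
        self.calls.append(now)
        return True


def validate_query(arguments: dict) -> str:
    """Reject anything that is not a short, plain-text query string."""
    query = arguments.get("query")
    if not isinstance(query, str) or not 1 <= len(query) <= 200:
        raise ValueError("query must be a string of 1 to 200 characters")
    return query


limiter = RateLimiter(limit=2, window=60.0)
print([limiter.allow(now=t) for t in (0.0, 1.0, 2.0)])  # [True, True, False]
print(validate_query({"query": "black running shoes"}))
```

Both checks run before the tool touches the underlying system, so a confused or manipulated model hits the limit or the validator first.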

The bottom line on MCP

MCP is no longer a forecast. It is the protocol your AI agents will be measured against in 2026. The hosts have shipped, the major vendors have shipped servers, and the systems your team uses every day, from GitHub to Zendesk to Snowflake to Shopify, are already reachable through MCP. What separates the teams that get value from the teams that ship orphan demos is how tightly they scope what their agents are allowed to do.

The starting move is rarely “build everything at once”. Pick the agent use case with a real business reason behind it, wrap the systems it needs, scope the tools tightly, ship, and iterate.

If you want to build with MCP and the question is where to start, talk to our team and we will scope it with you.
